Why Enterprise AI Projects Fail During Implementation

Read Time 17 mins | Written by: Owais Yusuf

Over 85% of enterprise AI projects never make it to production. The ones that do are frequently abandoned within 12 months — not because the models weren't good enough, but because the implementation fell apart around them.

This is the uncomfortable reality that vendors don't put in their pitch decks. Your organization can have the best Generative AI strategy and still fail — if the data infrastructure isn't ready, if change management is an afterthought, or if the team deploying it doesn't understand your business deeply enough to bridge the gap between model output and real-world decision-making.

This post breaks down the 7 real reasons enterprise AI implementations fail, what each failure mode looks like in practice, and what Ontrac Solutions does differently to make sure your AI investment actually delivers.

“Most enterprise AI projects don’t fail because the AI wasn’t smart enough. They fail because the organization wasn’t ready for it — and nobody told them that before they started.”

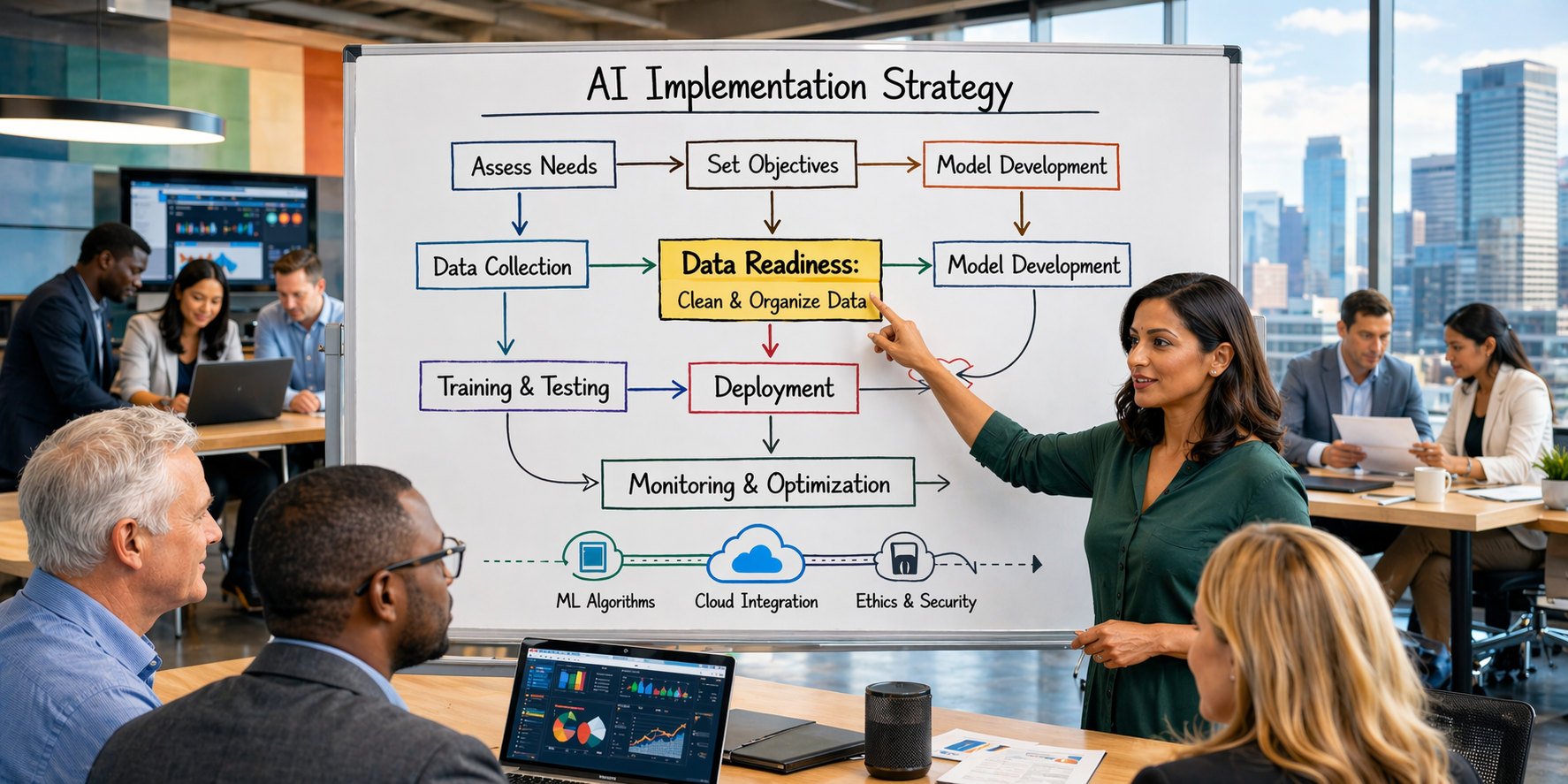

The Data Isn't Ready — And Nobody Checked

AI models are only as good as the data they're trained and run on. Yet most enterprises begin AI projects without auditing their data quality, completeness, and lineage first. The result: a model that performs brilliantly in the sandbox and produces garbage in production.

What This Looks Like in Practice

- ✗ Siloed data across departments with no unified schema

- ✗ Missing historical data that the model requires for accuracy

- ✗ Inconsistent labeling and naming conventions across systems

- ✗ No data governance process to maintain quality over time

The Ontrac Fix

We run a data readiness audit before any AI work begins — mapping data sources, identifying gaps, and building the pipelines needed to feed clean, consistent data into your models. AI without this foundation is just expensive guesswork.
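To make the idea of a data readiness check concrete, here is a minimal sketch in Python. It is not Ontrac's audit tooling; the function name `audit_records`, the field names, and the sample rows are all hypothetical. It measures how complete each required field is and flags label values that differ only by case or whitespace, two of the problems listed above.

```python
from collections import defaultdict


def audit_records(records, required_fields, label_field):
    """Report per-field completeness and labels that differ only by case/whitespace."""
    total = len(records)
    filled = {field: 0 for field in required_fields}
    variants = defaultdict(set)

    for row in records:
        for field in required_fields:
            value = row.get(field)
            # Treat None and blank strings as missing.
            if value is not None and str(value).strip() != "":
                filled[field] += 1
        label = row.get(label_field)
        if label is not None:
            # Group raw spellings under a normalized key.
            variants[str(label).strip().lower()].add(str(label))

    completeness = {
        field: (filled[field] / total if total else 0.0)
        for field in required_fields
    }
    inconsistent = {
        key: sorted(raw) for key, raw in variants.items() if len(raw) > 1
    }
    return {"completeness": completeness, "inconsistent_labels": inconsistent}


# Hypothetical sample rows pulled from two departmental systems.
sample = [
    {"account_id": "A1", "region": "EMEA", "signup_date": "2023-01-04"},
    {"account_id": "A2", "region": "emea", "signup_date": ""},
    {"account_id": "A3", "region": "APAC", "signup_date": None},
    {"account_id": "A4", "region": "APAC ", "signup_date": "2023-03-11"},
]
report = audit_records(sample, ["account_id", "region", "signup_date"], "region")
print(report)
```

In this sample, `signup_date` is only half populated and `region` is spelled two ways for both EMEA and APAC. A real audit would also cover lineage and cross-system schema mapping, which this sketch leaves out.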

The Use Case Was Never Defined Precisely Enough

"We want to use AI to improve efficiency" is not a use case. It's a hope. Enterprise AI projects that start with vague mandates almost always end in disappointment because there's no concrete success metric to work backward from — so nobody agrees on what winning even looks like.

Vague vs. Precise Use Cases

| Vague (Will Fail) | Precise (Will Succeed) |
|---|---|
| "Use AI for customer service" | "Reduce Tier-1 ticket volume by 40% using an LLM-powered agent trained on our knowledge base" |
| "Automate our reporting" | "Auto-generate weekly KPI summaries from our data warehouse, saving 6 analyst hours per week" |
| "Predict customer churn" | "Flag accounts with >70% churn probability 45 days before renewal and route to CSM intervention" |
| "Improve supply chain with AI" | "Reduce stockout events by 25% using demand forecasting models fed from POS + external signals" |
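One way to test whether a use case is precise enough is to ask whether its success metric can be written down as a check. The sketch below does that for the Tier-1 ticket example. The class name `UseCaseTarget` and the baseline and observed volumes are hypothetical figures chosen for illustration; only the 40% target comes from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseTarget:
    """A use case expressed as a baseline plus a required relative reduction."""
    name: str
    baseline: float          # metric value before the AI rollout
    target_reduction: float  # 0.40 means "reduce by 40%"

    def __post_init__(self):
        if self.baseline <= 0:
            raise ValueError("baseline must be positive")

    def reduction(self, observed: float) -> float:
        """Relative reduction achieved versus the baseline."""
        return (self.baseline - observed) / self.baseline

    def is_met(self, observed: float) -> bool:
        return self.reduction(observed) >= self.target_reduction


# Hypothetical numbers: 10,000 Tier-1 tickets per month before launch.
target = UseCaseTarget("Tier-1 ticket volume", baseline=10_000, target_reduction=0.40)

for observed in (7_000, 5_800):
    print(observed, round(target.reduction(observed), 2), target.is_met(observed))
```

A mandate like "use AI for customer service" cannot be encoded this way, which is a quick signal that it needs more definition before work starts.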

The Infrastructure Can't Support It at Scale

Enterprises often discover — after months of development — that their cloud infrastructure isn't configured for the compute demands of production AI workloads. Models that ran fine in notebooks suddenly hit latency walls, cost overruns, and security gaps when deployed at enterprise scale.

Without a proper cloud architecture and MLOps pipeline, AI systems become brittle. Model drift goes undetected. Retraining is manual. And when something breaks, nobody knows why.
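As an illustration of what automated drift detection can look like, here is a small sketch that compares a feature's training-time distribution with its live distribution using the Population Stability Index (PSI). PSI is one common choice, not necessarily what any given MLOps stack uses. The function name `psi`, the equal-width binning, the synthetic data, and the 0.2 alert threshold are all assumptions to tune per feature.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if the reference sample is constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic example: the live feature has shifted upward by 30 units.
reference = list(range(100))
live = [v + 30 for v in reference]

ALERT_THRESHOLD = 0.2  # illustrative assumption, not a universal rule
score = psi(reference, live)
print(f"PSI={score:.2f}", "drift alert" if score > ALERT_THRESHOLD else "stable")
```

Run on a schedule against production inputs, a check like this turns "model drift goes undetected" into an alert someone can act on.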


Nobody Prepared the People Who Have to Use It

You can build a flawless AI system and still have it sit unused six months later because the people it was built for don't trust it, don't understand it, or simply weren't involved in shaping it. Change management is consistently the most underinvested part of enterprise AI rollouts.

Warning Signs Your Rollout Will Fail

- ✗ End users weren't consulted during design

- ✗ No training or onboarding plan for day-1 launch

- ✗ The AI replaces workflows without explaining why

- ✗ No executive sponsor championing adoption

Our implementation teams embed with your business units — not just your IT department. We co-design workflows with the people who will use the AI daily, so adoption is built in from day one, not bolted on after launch.

The Team Didn't Have the Right Skills to Finish the Job

Enterprise AI requires a rare combination of skills: ML engineering, data engineering, cloud DevOps, prompt engineering, domain expertise, and product thinking. Most internal teams are strong in one or two of these areas — rarely all of them. And most vendor engagements bring in specialists who don't understand the business context.


Our AI engineering and data talent — embedded through our Innovation Hub delivery centers in Chicago and Karachi — brings all six skill sets under one engagement model. No gaps. No hand-off failures.

No Governance Framework for Responsible AI

AI systems that produce biased outputs, hallucinate facts, or make unexplainable decisions are a legal and reputational liability. Yet most enterprises launch AI without defining accountability structures, auditability requirements, or escalation paths for when the model gets it wrong.

“Responsible AI isn’t a compliance checkbox. It’s the difference between a system your business can trust and one that blows up in your face the first time it makes an error that matters.”

Our Responsible AI practice builds governance into the architecture — not as an afterthought. That means explainability layers, human-in-the-loop checkpoints, bias testing protocols, and audit trails that satisfy both internal and regulatory scrutiny.
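To show how a human-in-the-loop checkpoint and an audit trail fit together, here is a minimal sketch. It is an illustration under stated assumptions, not a description of any specific governance product: the function name `route_decision`, the 0.80 review threshold, and the record fields are hypothetical.

```python
import json
from datetime import datetime, timezone


def route_decision(case_id, prediction, confidence, audit_log, review_threshold=0.80):
    """Send low-confidence predictions to a human and log every decision."""
    route = "auto" if confidence >= review_threshold else "human_review"
    # Append a structured, timestamped record so each decision can be audited later.
    audit_log.append(json.dumps({
        "case_id": case_id,
        "prediction": prediction,
        "confidence": confidence,
        "route": route,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }))
    return route


audit_log = []  # in practice this would be durable, append-only storage
first = route_decision("case-001", "approve", 0.93, audit_log)
second = route_decision("case-002", "approve", 0.55, audit_log)
print(first, second, len(audit_log))
```

The point of the design is that the escalation path and the record of what the model decided exist before launch, rather than being reconstructed after an incident.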

Stuck in Pilot Purgatory — Forever Proving, Never Scaling

The most common place AI dies is between a successful proof of concept and a production deployment. Teams build impressive pilots, leadership gets excited, and then the project stalls — unable to clear the procurement hurdles, security reviews, and infrastructure investments required to scale.

77% of enterprise AI initiatives stall at the pilot stage. The organizations that break through are the ones that design for production from day one — not as an afterthought once the pilot "works."

Pilot Mindset vs. Production Mindset

🚫 Pilot Mindset

- "Let's prove it works first"

- Hardcoded data connections

- No security or compliance review

- Scaling left as a "future problem"

✅ Production Mindset

- "Design for scale from week 1"

- API-first, modular architecture

- Security baked in, not bolted on

- Clear path from MVP to full rollout
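The "hardcoded data connections" item is a good example of a pilot shortcut that is cheap to avoid. A minimal sketch, assuming hypothetical setting names (`WAREHOUSE_HOST`, `WAREHOUSE_DB`, `WAREHOUSE_PORT`) and a hypothetical `WarehouseConfig` type, reads connection details from the environment and fails fast when they are missing:

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class WarehouseConfig:
    host: str
    database: str
    port: int = 5432


def load_warehouse_config(env=None):
    """Build connection settings from environment variables instead of literals in code."""
    env = os.environ if env is None else env
    missing = [key for key in ("WAREHOUSE_HOST", "WAREHOUSE_DB") if not env.get(key)]
    if missing:
        # Fail at startup rather than at the first query in production.
        raise RuntimeError(f"Missing required settings: {', '.join(missing)}")
    return WarehouseConfig(
        host=env["WAREHOUSE_HOST"],
        database=env["WAREHOUSE_DB"],
        port=int(env.get("WAREHOUSE_PORT", "5432")),
    )


# A plain dict stands in for the process environment in this example.
config = load_warehouse_config(
    {"WAREHOUSE_HOST": "warehouse.internal.example", "WAREHOUSE_DB": "analytics"}
)
print(config)
```

The same code can then run against a sandbox, a staging warehouse, and production by changing configuration only, which is what keeps the path from MVP to full rollout short.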

How We Make Enterprise AI Actually Stick

At Ontrac Solutions, we have built our AI practice specifically around the failure modes above. Every engagement starts with a structured discovery process that stress-tests your data readiness, business case clarity, infrastructure maturity, and team capability before a single line of model code is written.

LLM strategy, RAG architecture, AI agents, and responsible AI governance for enterprise deployments.

Data readiness audits, pipeline engineering, and cloud data warehousing to feed your AI reliably.

Production-grade infrastructure, MLOps pipelines, and FinOps guardrails for AI at enterprise scale.

Start the Right Way

Is Your Organization Ready to Implement AI Successfully?

We run a free AI Readiness Assessment — covering your data, infrastructure, use case clarity, and team capability — so you know exactly where you stand before investing a dollar in development.

Book a Free AI Readiness Assessment →